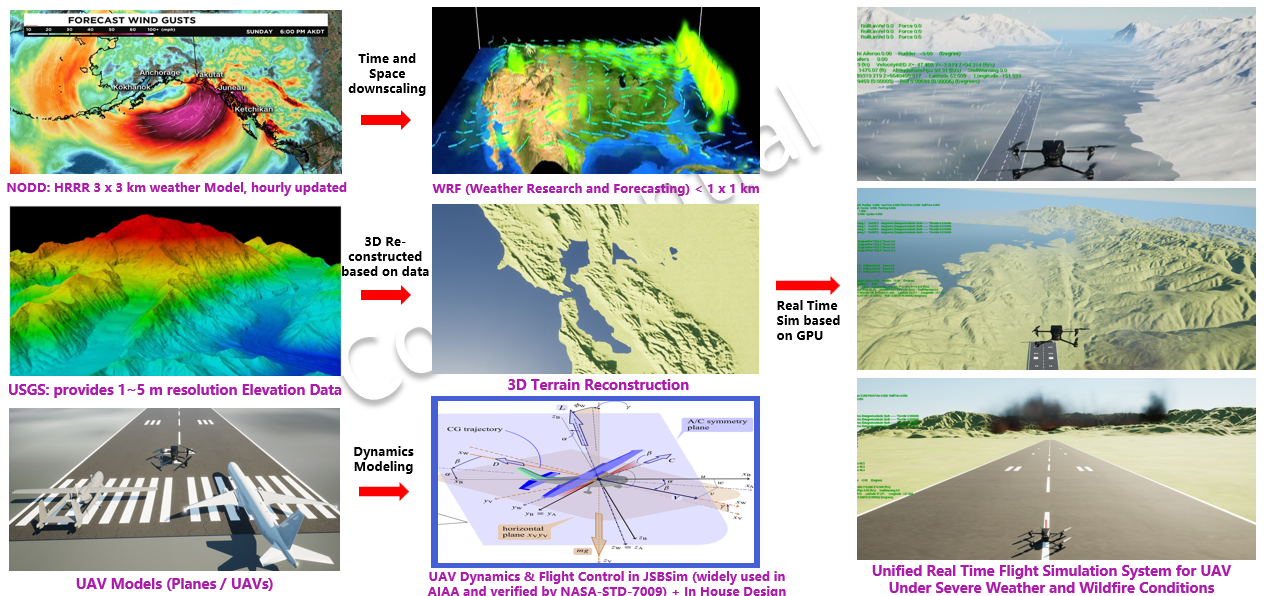

Baseline UAV Scenario

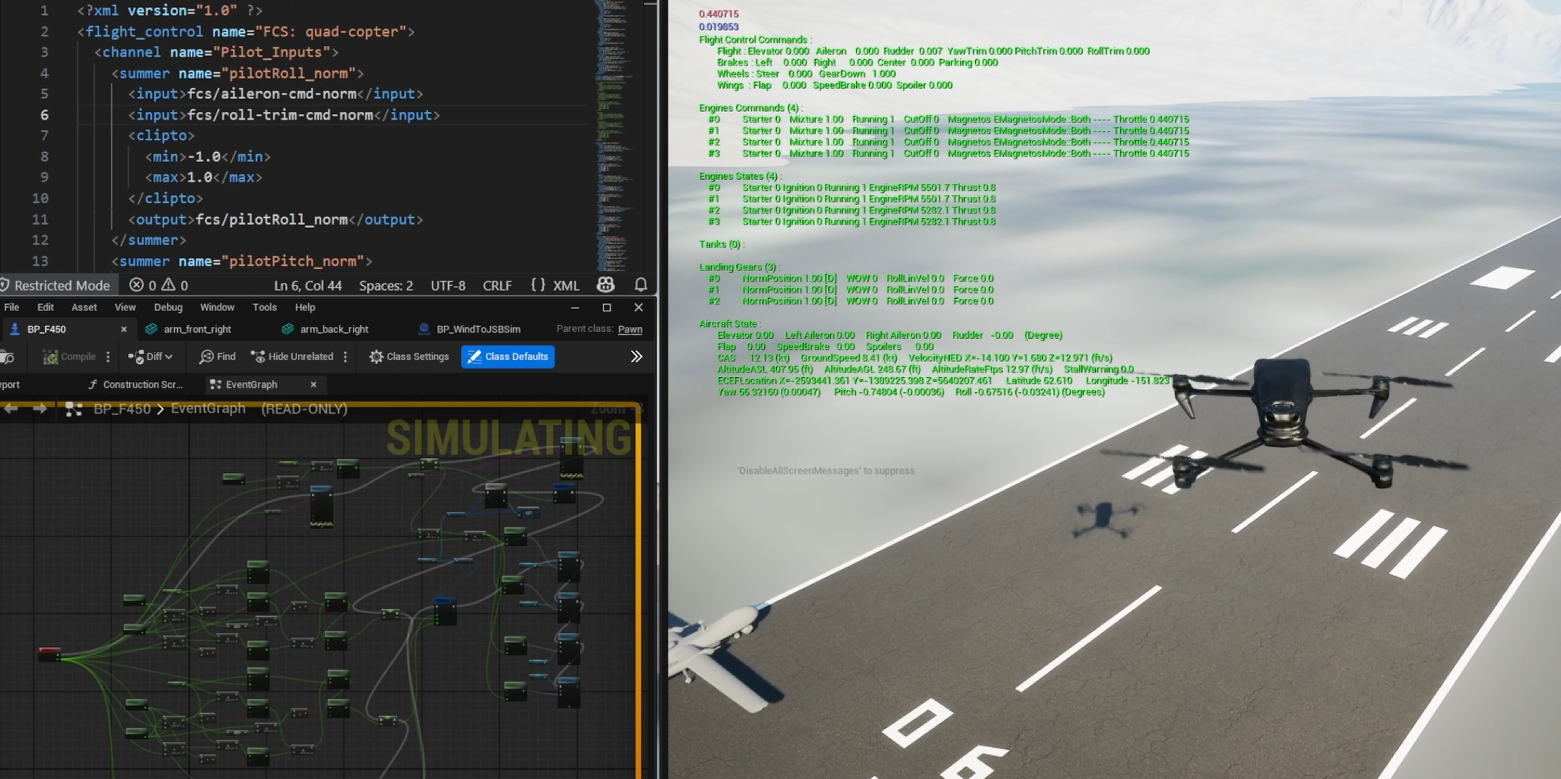

General UAV operation in the simulation environment.

Control stabilization behavior under active SCAS logic.

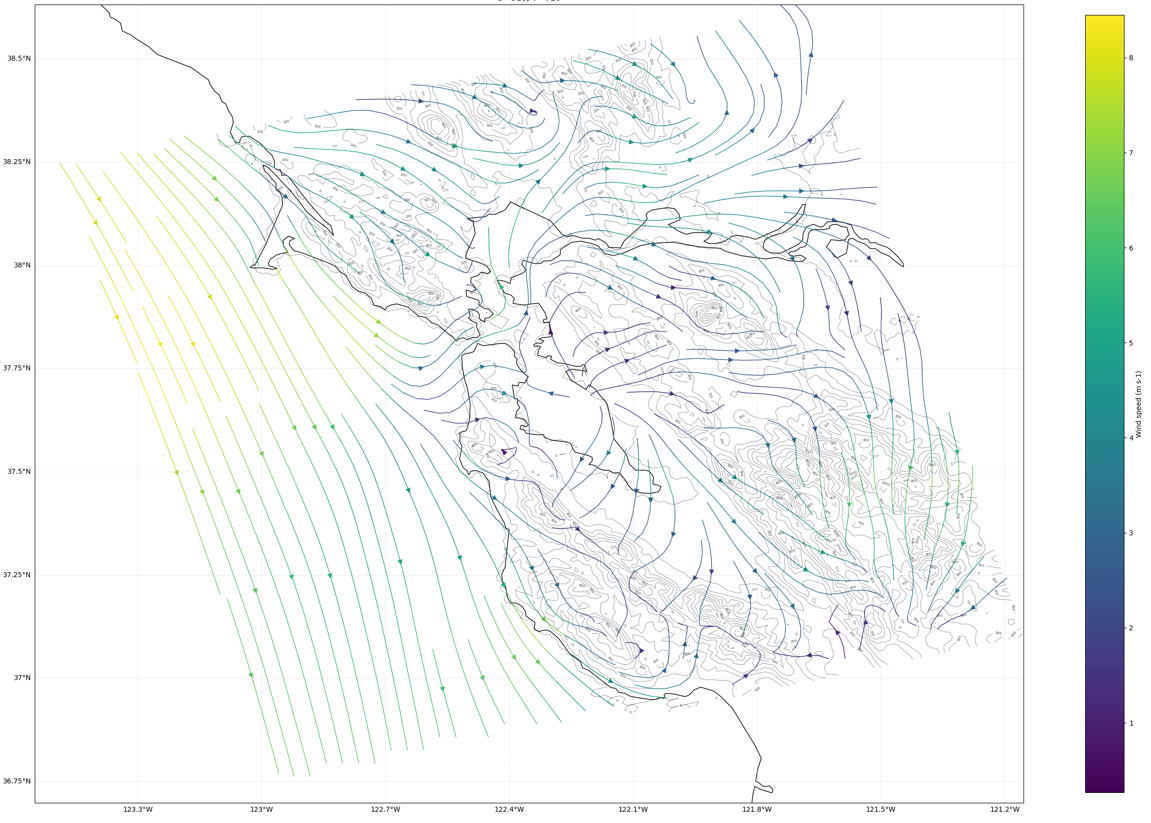

High-wind mission case with terrain-driven disturbances.

Wildfire-influenced airflow and visibility stress testing.